Summary:

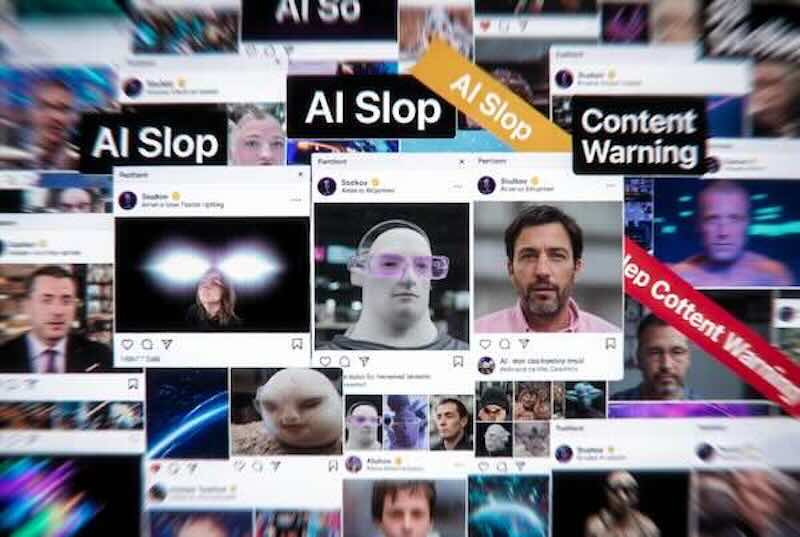

A surge of cheap, mass-produced AI images and videos is increasingly shaping what people see on social media, blurring the line between authentic posts and synthetic “filler” designed to farm clicks. The article follows users who say their feeds have become dominated by uncanny or emotionally manipulative AI content, including images that look realistic at a glance but fall apart under scrutiny. Some have started “call-out” accounts to document and mock the trend, while others report feeling exhausted and are disengaging from platforms altogether. The piece outlines how engagement-driven algorithms and low-cost generative tools make it easy to publish at scale, rewarding spam-like mass production and the monetisation that comes with it. Platforms are shown struggling to moderate the flood consistently, relying on user reporting and imperfect detection. As AI content becomes harder to spot, the article points to a broader risk, the erosion of trust in what’s real online, and to growing demand for stronger labelling, verification, and higher-quality curation.