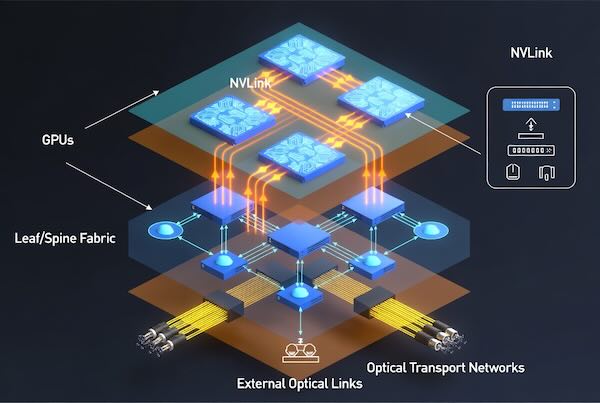

Summary: This deep-dive overview explains why interconnect technologies, which link compute, memory, and storage inside an AI datacenter, are as critical to performance and cost as the processors themselves. It outlines how interconnects range from on-die links and connections within a rack to optical links between datacenters, and details the roles different fabrics play (compute fabric, backend leaf-spine networks, frontend connectivity, and others). The piece frames interconnect choice in terms of performance, reach, power, and total cost, giving readers a foundational understanding of AI datacenter networking and its emerging trade-offs.

Source: https://www.viksnewsletter.com/p/a-beginners-guide-to-ai-interconnects