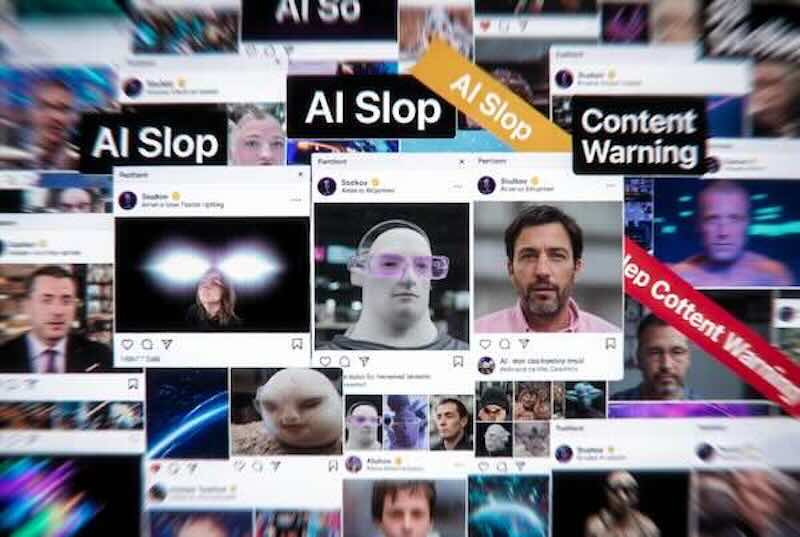

What happened: A CNET feature profiles the growing human resistance to AI slop — the torrent of low-quality, algorithmically amplified AI-generated content flooding social media, search results, and online publishing. Creators, platform engineers, scientists, and developers are all building their own defences against it.

Why it matters: A new CNET survey found 94% of US social media users believe they encounter AI-generated content while scrolling, and only 11% find it entertaining, useful, or informative. Slop is not marginal noise; it is rapidly becoming the default texture of the internet, displacing the human-made content that the models behind it were trained on.

Wider context: AI video tools from OpenAI, Google, and Meta have made content creation so cheap that engagement-farming accounts are pulling in millions of dollars in ad revenue through sheer volume. A Kapwing report found that a third of the first 500 YouTube Shorts served to a new account are some form of AI slop, and as of February 2026 TikTok hosted over 1.3 billion videos labelled as AI-generated.

Background: The Coalition for Content Provenance and Authenticity (C2PA) is working to standardise invisible watermarking for synthetic media, but not all AI models embed content credentials, and platform enforcement remains inconsistent. LinkedIn has removed hundreds of engagement-farming groups in recent months, yet says no single signal reliably identifies fake or automated accounts.

AI Slop Is Destroying the Internet. These Are the People Fighting to Save It — CNET

Singularity Soup Take: The same platforms profiting from AI slop’s engagement lift are now tasked with cleaning it up — and the yawning gap between those two incentives is precisely why every attempt to stem the flood seems to arrive a little too late.

Key Takeaways:

- Creator Pushback: Baker and YouTuber Rosanna Pansino (21M+ followers) has launched a series recreating AI food slop videos in real life, using the direct comparison to highlight the craft and skill that generative AI replaces with a single prompt.

- Scale of the Problem: Over 1.3 billion TikTok videos were labelled AI-generated as of February 2026; according to Kapwing research, roughly one in three of the first 500 Shorts recommended to a new YouTube account is AI slop.

- Detection Limits: LinkedIn has removed hundreds of engagement-farming groups in recent months but states that no single signal definitively identifies inauthentic accounts — detection relies on combinations of behavioural patterns, making it inherently imperfect.

- Watermarking Push: The C2PA coalition is working to standardise invisible watermarking for AI-generated media, but adoption is uneven — not all AI models embed content credentials, leaving significant gaps in provenance tracking.